Producer to Plugin Architect: AI-Assisted Coding for Music Producers

By James Richmond

21st February 2026

Building bespoke music software with AI as scaffolding, not substitute.

Over the past year I have found myself in an unexpected position. I began developing a compositional tool called EventField as part of a wider research direction around indeterminacy, probability and generative systems. It converts text into Morse code, maps that to MIDI and hosts Audio Unit instruments, creating a modular compositional environment. It started as a personal experiment. It is now a functioning macOS application moving from alpha to beta under the EventFieldAudio banner.

What makes this interesting is not just what the software does, but how it was built. I do not come from a traditional software engineering background. I was a network engineer for years and have written scripts in various contexts, and, like many producers, I have built hundreds of Max and Reaktor patches. But I did not know Swift when I started. I did not know how to build an AU host. I had never implemented live audio transcription inside my own application.

AI-assisted coding tools changed that equation.

This article is not about hype. It is about practical use, hard limits and what happens when producers move from buying tools to building them.

Why build your own tools at all

For most of us, plugin culture has trained a particular mindset. If you need something, you search, you buy, you install. There is a vast ecosystem of instruments, delays, compressors and modulation devices. That ecosystem is extraordinary, but it is also generic. It solves common problems.

EventFieldAudio began from a different question. What if the tool reflects a compositional philosophy rather than a market category?

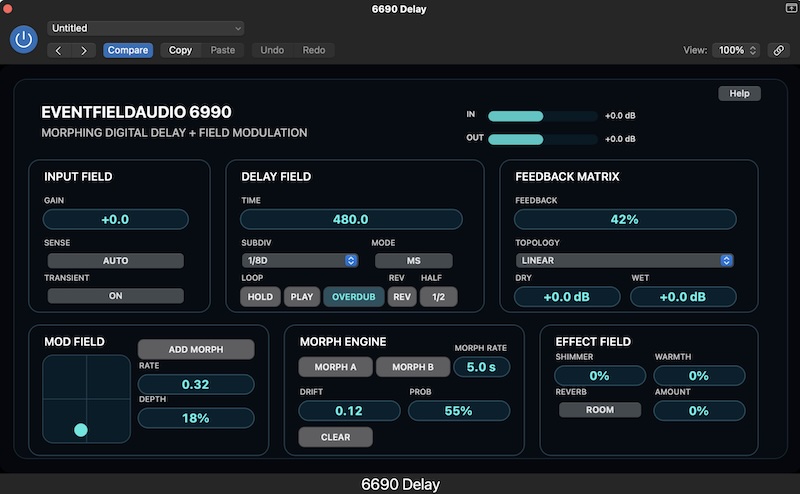

EventField is not a synth, not a sequencer and not a delay. It is a generative mapping environment. BreakChopper, another EventFieldAudio project, is not a drum slicer in the conventional sense. It is a probability-driven loop mutation engine. The 6990 Delay that I am currently developing is not just a digital delay. It treats delay as a temporal field where echoes can be redistributed according to topology and probability rather than simply fed back linearly.

These tools do not exist off the shelf because they are specific to a way of thinking about music.

AI-assisted development has made it possible for producers to bridge the gap between concept and implementation without becoming full-time developers.

AI as scaffolding, not content

In my other professional work we have internal guidelines around AI use. I often summarise the core principle as using it for scaffolding rather than content.

Applied to music software development, that means this:

AI can help structure a project

AI can generate boilerplate

AI can explain frameworks

AI can draft code stubs

AI can debug obvious issues

But AI does not define the architecture.

AI does not decide the signal flow.

AI does not choose the compositional philosophy.

AI does not determine the interface logic.

When building EventField, I used AI to accelerate my learning of Swift, to understand AVFoundation and to prototype AU hosting. But the mapping logic from text to Morse to MIDI was mine. The probability distributions were mine. The user experience decisions were mine.

If you allow AI to produce the content of the system rather than scaffolding the structure, you lose authorship very quickly. The tool becomes generic. The interesting part of this shift is not that machines can write code. It is that they allow humans to operate at a higher architectural level.

Practical workflow for producers

If you are a producer thinking about building bespoke tools, here is what this has looked like in practice.

1. Define the concept in musical terms

Before writing a line of code for EventField, I defined the compositional behaviour.

Text becomes symbolic rhythm

Morse becomes pulse logic

MIDI becomes generative event structure

Audio Units provide timbral realisation
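To make the first two steps of that chain concrete, here is a minimal Swift sketch that turns text into on/off pulses on a unit grid using standard Morse timing (dot 1 unit, dash 3 units, and gaps of 1, 3 and 7 units inside a letter, between letters and between words). It is an illustration, not EventField's code: the `Pulse` type, the `pulses(for:)` function and the letters-only table are assumptions made for this example.

```swift
// Hypothetical sketch: text -> Morse symbols -> on/off pulses on a unit grid.
// The table covers letters only; other characters are skipped.
let morseTable: [Character: String] = [
    "A": ".-", "B": "-...", "C": "-.-.", "D": "-..", "E": ".", "F": "..-.",
    "G": "--.", "H": "....", "I": "..", "J": ".---", "K": "-.-", "L": ".-..",
    "M": "--", "N": "-.", "O": "---", "P": ".--.", "Q": "--.-", "R": ".-.",
    "S": "...", "T": "-", "U": "..-", "V": "...-", "W": ".--", "X": "-..-",
    "Y": "-.--", "Z": "--.."
]

/// One pulse: `isOn` says whether these grid steps sound, `units` is the duration.
struct Pulse: Equatable {
    let isOn: Bool
    let units: Int
}

/// Standard Morse timing: dot = 1 unit, dash = 3 units,
/// gap inside a letter = 1, between letters = 3, between words = 7.
func pulses(for text: String) -> [Pulse] {
    var result: [Pulse] = []
    let words = text.uppercased().split(separator: " ")
    for (w, word) in words.enumerated() {
        if w > 0 { result.append(Pulse(isOn: false, units: 7)) }
        let codes = word.compactMap { morseTable[$0] }   // drop unmapped characters
        for (c, code) in codes.enumerated() {
            if c > 0 { result.append(Pulse(isOn: false, units: 3)) }
            for (s, symbol) in code.enumerated() {
                if s > 0 { result.append(Pulse(isOn: false, units: 1)) }
                result.append(Pulse(isOn: true, units: symbol == "." ? 1 : 3))
            }
        }
    }
    return result
}
```

Each `Pulse` can then be read as a run of steps on a sequencer grid, which is where the MIDI stage takes over.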

For BreakChopper the concept was:

Load a loop

Segment it

Apply stochastic mutation

Export controlled variation sets
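Here is one way "stochastic mutation" and "controlled variation sets" can look in code. This sketch assumes equal-length slices and a simple rule where each step keeps its own slice or, with some probability, plays a randomly chosen slice instead. The seeded generator is what makes a set controlled: the same seed always reproduces the same variations. `SplitMix64`, `segment`, `mutate` and `variationSet` are hypothetical names, not BreakChopper's implementation.

```swift
/// SplitMix64: a small, seedable generator, so a variation set can be reproduced exactly.
struct SplitMix64: RandomNumberGenerator {
    private var state: UInt64
    init(seed: UInt64) { state = seed }
    mutating func next() -> UInt64 {
        state &+= 0x9E37_79B9_7F4A_7C15
        var z = state
        z = (z ^ (z >> 30)) &* 0xBF58_476D_1CE4_E5B9
        z = (z ^ (z >> 27)) &* 0x94D0_49BB_1331_11EB
        return z ^ (z >> 31)
    }
}

/// Frame ranges for `sliceCount` equal slices of a loop `frameCount` frames long.
func segment(frameCount: Int, sliceCount: Int) -> [Range<Int>] {
    precondition(sliceCount > 0 && frameCount >= sliceCount)
    let size = frameCount / sliceCount
    return (0..<sliceCount).map { i in
        // The last slice absorbs any remainder frames.
        let end = (i == sliceCount - 1) ? frameCount : (i + 1) * size
        return (i * size)..<end
    }
}

/// One mutated playback order: each step keeps its own slice with probability
/// 1 - `mutationChance`, otherwise it plays a randomly chosen slice.
func mutate<G: RandomNumberGenerator>(sliceCount: Int, mutationChance: Double,
                                      using rng: inout G) -> [Int] {
    (0..<sliceCount).map { step in
        Double.random(in: 0..<1, using: &rng) < mutationChance
            ? Int.random(in: 0..<sliceCount, using: &rng)
            : step
    }
}

/// A reproducible set of variations: same seed, same set.
func variationSet(sliceCount: Int, mutationChance: Double, count: Int, seed: UInt64) -> [[Int]] {
    var rng = SplitMix64(seed: seed)
    return (0..<count).map { _ in
        mutate(sliceCount: sliceCount, mutationChance: mutationChance, using: &rng)
    }
}
```

Exporting a set then means rendering each playback order by concatenating the corresponding frame ranges.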

For the 6990 Delay:

Treat delay time as a distribution

Introduce topology modes

Morph between behavioural states

Allow probabilistic persistence of echoes
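One reading of "delay time as a distribution" and "probabilistic persistence" is an echo schedule where each spacing is drawn at random around a centre time, and each echo survives to spawn the next only with some probability. This Swift sketch assumes a uniform distribution and a fixed feedback gain. It is illustrative only: `Echo` and `echoSchedule` are hypothetical, and the 6990 Delay's topology modes are not modelled here.

```swift
/// One echo: when it sounds (ms after the dry signal) and how loud.
struct Echo: Equatable {
    let timeMs: Double
    let gain: Double
}

/// Echo spacing is a random variable drawn uniformly from centreMs ± spreadMs,
/// and after each echo the chain continues only with probability `persistence`.
func echoSchedule<G: RandomNumberGenerator>(
    centreMs: Double, spreadMs: Double, feedback: Double,
    persistence: Double, maxEchoes: Int, using rng: inout G
) -> [Echo] {
    precondition(spreadMs >= 0 && maxEchoes > 0)
    var echoes: [Echo] = []
    var time = 0.0
    var gain = 1.0
    for _ in 0..<maxEchoes {
        // Spacing is sampled, not fixed: this is the "distribution" part.
        let spacing = centreMs + Double.random(in: -spreadMs...spreadMs, using: &rng)
        time += max(spacing, 1.0)          // keep echoes moving forward in time
        gain *= feedback
        echoes.append(Echo(timeMs: time, gain: gain))
        // Probabilistic persistence: the chain may end early.
        if Double.random(in: 0..<1, using: &rng) >= persistence { break }
    }
    return echoes
}
```

With `spreadMs` at 0 and `persistence` at 1 this collapses to an ordinary linear feedback delay, which is a useful sanity check on the model.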

This stage is musical, not technical. AI cannot do it for you.

2. Break the system into modules

With EventField I separated:

Text processing

Morse conversion

MIDI event generation

AU hosting

UI layer

Persistence

This modular thinking makes AI assistance far more effective because you can ask specific questions.

Instead of asking "How do I build a music app?", you ask "How do I instantiate an Audio Unit plugin and route MIDI events to it?"

The difference in clarity is enormous.
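The module boundaries in step 2 can be expressed as Swift protocols, which is also what makes those narrow questions possible: each protocol is small enough to discuss, implement and test on its own. This is a hypothetical sketch covering only text, encoding, event generation and hosting (hosting is reduced to a recording stub); none of these type names come from EventField.

```swift
/// A note on a step grid; deliberately simpler than real MIDI.
struct NoteEvent: Equatable {
    let step: Int
    let note: UInt8
    let velocity: UInt8
}

// One protocol per concern. All names are hypothetical.
protocol TextSource { func currentText() -> String }
protocol SymbolEncoder { func encode(_ text: String) -> [Bool] }          // true = sounding step
protocol EventGenerator { func events(from steps: [Bool]) -> [NoteEvent] }
protocol InstrumentHost { mutating func play(_ events: [NoteEvent]) }

// Trivial stand-ins, just enough to show the modules composing.
struct FixedText: TextSource {
    let text: String
    func currentText() -> String { text }
}

struct DotDashEncoder: SymbolEncoder {
    // Any non-space character is a sounding step; spaces are rests.
    func encode(_ text: String) -> [Bool] { text.map { $0 != " " } }
}

struct FixedPitchGenerator: EventGenerator {
    let note: UInt8
    func events(from steps: [Bool]) -> [NoteEvent] {
        steps.indices.filter { steps[$0] }.map { NoteEvent(step: $0, note: note, velocity: 100) }
    }
}

/// Stands in for the AU hosting layer; a real host would forward events to an instrument.
struct RecordingHost: InstrumentHost {
    var played: [NoteEvent] = []
    mutating func play(_ events: [NoteEvent]) { played += events }
}

/// Wires the modules together. Each stage can be swapped without touching the others.
func runPipeline<H: InstrumentHost>(source: any TextSource, encoder: any SymbolEncoder,
                                    generator: any EventGenerator, host: inout H) {
    let steps = encoder.encode(source.currentText())
    host.play(generator.events(from: steps))
}
```

Swapping `RecordingHost` for a real Audio Unit host changes one module and leaves the compositional logic untouched.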

3. Use AI to accelerate unfamiliar territory

Live audio transcription inside EventField required exploring Apple’s speech recognition frameworks. I had no prior experience with that.

AI helped me:

Understand the API structure

Draft example implementations

Identify permissions and threading requirements

Refactor code for clarity

It did not remove the need for debugging. It did not remove the need for testing. But it compressed the research phase dramatically.

The same applied to building an AU host. Hosting Audio Units inside your own app is non-trivial. There are lifecycle concerns, sandboxing issues and real-time audio constraints. AI was invaluable in explaining patterns and generating initial scaffolding.

4. Refine manually

Every significant component required manual rewriting.

AI-generated code is often verbose, inconsistent in style and occasionally incorrect in subtle ways. It may compile but behave incorrectly under load. It may ignore real-time constraints. It may allocate memory on the audio thread.

You still need judgement.

In the 6990 Delay, modulation smoothing, buffer management and topology routing were not copy-and-paste exercises. They required an understanding of DSP fundamentals. AI can assist, but it cannot replace experience.
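Modulation smoothing is a good example of a small piece of DSP you need to understand rather than paste. A common approach is a one-pole smoother, sketched here in Swift under the assumption of a per-sample update. It shows the general technique; the 6990 Delay may well use something different, and `OnePoleSmoother` is a hypothetical name.

```swift
import Foundation

/// One-pole parameter smoother: each sample it moves a fixed fraction of the way
/// toward the target, so a jump in a control value becomes an exponential glide
/// instead of a click or zipper noise.
struct OnePoleSmoother {
    private(set) var current: Double
    var target: Double
    private let coefficient: Double

    /// `timeConstant` is in seconds: after that long the value has covered
    /// about 63% (1 - 1/e) of the distance to the target.
    init(initial: Double, timeConstant: Double, sampleRate: Double) {
        precondition(timeConstant > 0 && sampleRate > 0)
        current = initial
        target = initial
        coefficient = 1 - exp(-1 / (timeConstant * sampleRate))
    }

    /// Advance one sample. No allocation and no locks, so it can run per sample.
    mutating func next() -> Double {
        current += coefficient * (target - current)
        return current
    }
}
```

Setting `target` from the UI and calling `next()` once per sample inside the processing loop is the usual pattern; the coefficient is computed once, outside the audio path.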

The realities and limitations

There are hard limits to AI-assisted coding in audio.

Real-time constraints

Audio code must be deterministic and low-latency. AI often suggests patterns that are safe in general software but unsafe on the audio thread: for example, allocating memory or taking a lock inside the render callback, either of which can block long enough to cause audible dropouts.

You must know enough to spot these issues.
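As a minimal Swift sketch of the alternative, here is a delay line that allocates its buffer once, at initialisation, and afterwards only reads and writes that memory inside `process`. The class name and method signature are assumptions for illustration; a real Audio Unit would call something like this from its render block, passing in the buffer pointers it receives there. The output is the delayed (wet) signal only.

```swift
/// A delay line that allocates once, up front, and never again.
final class DelayLine {
    private let buffer: UnsafeMutablePointer<Float>
    private let capacity: Int
    private var writeIndex = 0

    init(maxDelaySamples: Int) {
        precondition(maxDelaySamples > 0)
        capacity = maxDelaySamples + 1
        // All memory is acquired here, off the audio thread.
        buffer = UnsafeMutablePointer<Float>.allocate(capacity: capacity)
        buffer.initialize(repeating: 0, count: capacity)
    }

    deinit {
        buffer.deallocate()
    }

    /// Audio-thread work: processes `frameCount` samples in place, replacing each
    /// input sample with the one from `delaySamples` ago and writing input plus
    /// feedback into the ring buffer. No allocation, no locks, no growing arrays.
    func process(_ samples: UnsafeMutablePointer<Float>, frameCount: Int,
                 delaySamples: Int, feedback: Float) {
        let delay = min(max(delaySamples, 1), capacity - 1)
        for i in 0..<frameCount {
            let readIndex = (writeIndex - delay + capacity) % capacity
            let delayed = buffer[readIndex]
            buffer[writeIndex] = samples[i] + delayed * feedback
            samples[i] = delayed
            writeIndex = (writeIndex + 1) % capacity
        }
    }
}
```

The AI-typical version of this appends to a Swift array or creates a new buffer per call; both work in a unit test and both can glitch under load.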

Debugging remains yours

When something crashes in a custom AU host, the responsibility is yours. AI can suggest possibilities but you still have to trace the logic.

Surface-level understanding is dangerous

It is easy to feel competent quickly. You can produce a working UI, instantiate a synth and route MIDI. But without understanding lifecycle management, threading and memory, your application will break under stress.

AI accelerates access, not mastery.

Strengths that are transformative

Despite those limits, the strengths are substantial.

Speed of iteration

BreakChopper went from concept to beta far faster than it would have five years ago. I could test variations, rewrite UI components and explore export logic without weeks of research.

Reduction of intimidation

The psychological barrier to entry has lowered. If you have built complex Max patches or Reaktor ensembles, you already think in modular systems. AI bridges the syntax gap between conceptual design and compiled application.

Architectural thinking

Perhaps most importantly, it shifts your role.

You are no longer primarily implementing algorithms. You are defining systems.

In EventFieldAudio projects I increasingly operate as an architect of behaviour. AI fills in walls and wiring. I decide where the rooms go.

Lessons from building an AU host and MIDI system

EventField forced me to engage with several deeper layers of macOS audio development.

Hosting Audio Units

AU instantiation requires careful lifecycle management

Parameter binding must be explicit

MIDI routing requires attention to timing and buffering

Preset persistence must be handled deliberately

AI helped with the syntax. It did not remove the need to understand how AUParameterTree works or how render blocks operate.
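Timing and buffering in a render block come down to converting musical time into sample positions and delivering each event at the right frame offset within the current buffer. This sketch assumes the events have been scheduled and sorted off the audio thread. `ScheduledNote`, `EventCursor` and `sampleTime(forBeat:bpm:sampleRate:)` are hypothetical; in a real host the `emit` callback would hand each event to the instrument, for example through its `scheduleMIDIEventBlock`, rather than collect it.

```swift
/// A note scheduled at an absolute position on the sample timeline.
struct ScheduledNote: Equatable {
    let sampleTime: Int64
    let note: UInt8
}

/// Musical time to sample time: beats -> seconds -> samples.
func sampleTime(forBeat beat: Double, bpm: Double, sampleRate: Double) -> Int64 {
    Int64((beat * 60.0 / bpm * sampleRate).rounded())
}

/// Walks a pre-sorted schedule with a persistent cursor. Each render cycle emits
/// the events that fall inside it, with their frame offset into the buffer.
/// The schedule is built off the audio thread; nothing is allocated here.
struct EventCursor {
    private let events: [ScheduledNote]   // must be sorted by sampleTime
    private var index = 0

    init(sortedEvents: [ScheduledNote]) {
        events = sortedEvents
    }

    mutating func render(cycleStart: Int64, frameCount: Int,
                         emit: (_ offset: Int, _ note: UInt8) -> Void) {
        let cycleEnd = cycleStart + Int64(frameCount)
        while index < events.count && events[index].sampleTime < cycleEnd {
            // Late events (from a skipped or delayed cycle) play at offset 0 instead of vanishing.
            let offset = max(0, Int(events[index].sampleTime - cycleStart))
            emit(offset, events[index].note)
            index += 1
        }
    }
}
```

Playing late events at offset 0 rather than dropping them is a design choice, and arguably a musical one as much as a technical one.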

MIDI generation from symbolic input

Mapping Morse code to MIDI events required decisions about:

Timing quantisation

Velocity mapping

Polyphony rules

Channel allocation

These were musical decisions expressed in code.
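To show how those four decisions surface in code, here is a hypothetical Swift mapping from a Morse string to note events. The specific choices (6 ticks per Morse unit, softer dots and accented dashes, one note at a time, letters alternating between two MIDI channels) are assumptions for the example, not EventField's settings.

```swift
/// A note event on a tick grid (channel is 0-15, as in raw MIDI).
struct MIDINoteEvent: Equatable {
    let startTick: Int
    let lengthTicks: Int
    let note: UInt8
    let velocity: UInt8
    let channel: UInt8
}

/// Hypothetical mapping settings; each property is one of the musical decisions.
struct MorseToMIDIMapping {
    var ticksPerUnit = 6             // timing quantisation: one Morse unit = one grid step
    var dotVelocity: UInt8 = 80      // velocity mapping: dots softer...
    var dashVelocity: UInt8 = 112    // ...dashes accented
    var note: UInt8 = 60             // a single pitch keeps the example short
    var channels: [UInt8] = [0, 1]   // channel allocation: rotate per letter

    /// `morse` uses "." and "-" for symbols, " " between letters and "/" between words.
    /// Polyphony rule: monophonic. Every symbol is followed by at least a one-unit gap,
    /// so a note always ends before the next one starts.
    func events(for morse: String) -> [MIDINoteEvent] {
        precondition(!channels.isEmpty && ticksPerUnit > 0)
        var result: [MIDINoteEvent] = []
        var cursor = 0          // next free position, in Morse units
        var pendingGap = 0      // largest gap requested since the last symbol
        var hasPlayed = false
        var letterIndex = 0
        for ch in morse {
            switch ch {
            case " ":
                pendingGap = max(pendingGap, 3)   // gap between letters
            case "/":
                pendingGap = max(pendingGap, 7)   // gap between words
            case ".", "-":
                if hasPlayed {
                    // No explicit gap means the next symbol of the same letter (1 unit).
                    cursor += pendingGap > 0 ? pendingGap : 1
                    if pendingGap > 0 { letterIndex += 1 }
                }
                let isDot = ch == "."
                let units = isDot ? 1 : 3
                result.append(MIDINoteEvent(
                    startTick: cursor * ticksPerUnit,
                    lengthTicks: units * ticksPerUnit,
                    note: note,
                    velocity: isDot ? dotVelocity : dashVelocity,
                    channel: channels[letterIndex % channels.count]))
                cursor += units
                pendingGap = 0
                hasPlayed = true
            default:
                break                             // ignore anything else
            }
        }
        return result
    }
}
```

Changing any one property (a coarser grid, flatter velocities, more channels) changes the music without touching the parsing logic, which is the point of keeping the decisions explicit.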

Live transcription

Integrating live audio transcription opened new creative possibilities. Spoken text becomes compositional input. But it also introduced issues of latency, permissions and UI feedback.

Again, AI accelerated understanding of the framework but not the musical implications.

Where this leads for musicians

The most interesting shift is cultural.

For decades, plugin development was the domain of specialist companies and DSP engineers. Musicians consumed tools.

Now, musicians with conceptual clarity can produce bespoke systems that reflect their aesthetic.

Imagine:

A guitarist building a delay that reflects their rhythmic language

A composer creating a generative orchestration engine

A producer building a sampler that mutates material based on lyric content

A sound designer building topology-driven reverbs

This does not mean everyone should become a developer. It means the barrier has lowered enough that those with specific ideas can implement them.

EventFieldAudio exists because of that shift.

Ethics and best practice

With accessibility comes responsibility.

Using AI for scaffolding rather than content is not just a slogan. It is an ethical position.

If AI writes your compositional logic, your UI decisions and your DSP behaviour, authorship becomes diluted.

In EventFieldAudio projects I use AI to:

Accelerate framework understanding

Generate structural templates

Suggest debugging strategies

I do not use it to generate the artistic logic.

Documentation should be clear about how AI was used. Transparency matters. Musicians deserve to know whether a tool reflects an intentional design or an automated assembly.

There is also a broader concern around dependency. If your development process collapses without AI assistance, you may be operating at a fragile layer of understanding. The goal should be augmentation, not substitution.

Conclusion

AI-assisted coding is not magic and it is not a threat to musicianship. It is a lever.

Used carelessly, it produces generic tools and shallow understanding.

Used deliberately, it allows producers to move from consumer to architect.

EventField, BreakChopper and the 6990 Delay are not impressive because they use AI. They are interesting because they express a coherent aesthetic around indeterminacy, topology and generative structure. AI simply made it feasible to build them at speed.

For producers who have long wanted custom tools that reflect their musical thinking rather than market categories, this is a significant moment.

The question is not whether AI will replace developers.

The more interesting question is whether musicians are ready to take responsibility for building their own systems.

And if they are, how deeply they are willing to understand what they build.